Teams usually breathe out once the Register of Information (RoI) is accepted. That is often the moment the real scrutiny starts.

The RoI exists to support supervision and the annual process for designating critical information and communication technology (ICT) third-party providers, as the RoI implementing technical standards make clear. Acceptance is therefore only the point at which supervisors stop handling a submission and start analysing it. Acceptance proves the file is ingestible; review tests whether the dataset is usable for analysis without manual reconstruction.

This article stays in that post-acceptance lane. The submission workflow is a different problem.

Supervisory first-pass triage (the joins they test immediately)

| What the reviewer tries to do | Which field or join is being pressure-tested | What follow-up it triggers |
|---|---|---|
| Aggregate providers | Provider identifier, identifier type, ultimate parent undertaking identifier | “Show this provider as one group relationship across entities.” |
| Trace the contract spine | Contractual arrangement reference number across B_02.01, B_02.02 and B_02.03 | “Which live arrangement is this, and why did the reference change?” |
| Reconcile accountability and use | B_03.01 signer records against B_04.01 service-user records | “Who signed, who consumes, and where is that relationship evidenced?” |
| Sanity-check critical or important function (CIF) records | Function criticality, reasons, reliance, substitutability, alternative-provider fields | “Why do similar services carry different CIF logic?” |
| Check downstream visibility for CIF-supported services | Whether the provider actually underpinning the service can be shown | “Which provider really sits underneath this dependency?” |
| Interpret coded values | B_99.01 internal definitions for closed-list values | “What does this value mean in the context of your group?” |

The six credibility tests supervisors run after acceptance

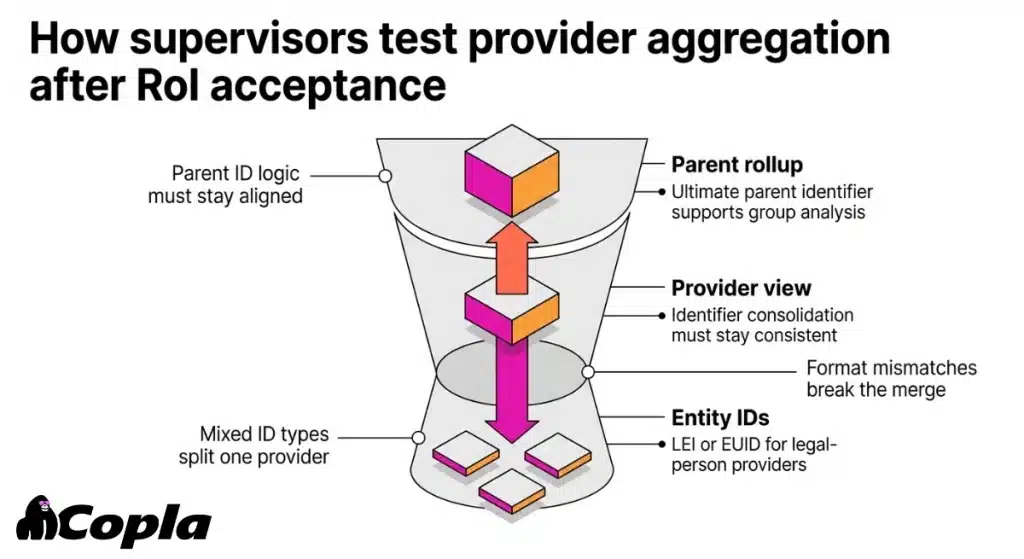

1. Provider aggregation fails when identifiers don’t consolidate to parent level

The first weak signal is usually not a missing row, but a provider that cannot be consolidated cleanly.

The same ICT third-party provider may appear under different code logic across entities, the parent grouping may not reconcile, or a commercial brand may be doing work that a legal-entity structure should have done. In practice, reviewers try to roll all records up to one provider and then up again to the ultimate parent undertaking. If the same provider splits across identifier types, formatting variants, or entity-specific IDs, the provider view collapses quickly.

The identifier rules are not loose.

Legal-person ICT third-party providers are identified with legal entity identifier (LEI) or European Unique Identifier (EUID), and where available both. The register also has to preserve consistency in the consolidation of identifiers.

At parent level, the ultimate parent undertaking code and its code type must match the identifier logic used for that parent undertaking.

The supervisory consequence lands one step later.

The DORA RoI reporting FAQ (19 March 2025) says the ultimate parent undertaking identifier is used in analysis to group relevant ICT third-party providers belonging to the same group. It also says that where a third-country LEI is unavailable, another identifier may avoid rejection but will still be highlighted as a data-quality issue.

So a record can pass intake and still be weak in analysis. That is also why the required data fields matter more than the file’s surface completeness.
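The aggregation test described above can be approximated before submission. The following is a minimal sketch, assuming provider rows have been exported to simple dictionaries; the field names (`provider_id`, `id_type`, `parent_id`) and the sample identifiers are hypothetical, not official RoI column codes. It flags identifiers that differ only by formatting and legal-person rows reported under something other than LEI or EUID.

```python
from collections import defaultdict

# Hypothetical, simplified provider rows. Field names and identifier values
# are illustrative only; they are not the official RoI column codes.
rows = [
    {"entity": "Bank A", "provider_id": "LEI-PROV-0001", "id_type": "LEI", "parent_id": "LEI-PARENT-01"},
    {"entity": "Bank B", "provider_id": "lei-prov-0001 ", "id_type": "LEI", "parent_id": "LEI-PARENT-01"},
    {"entity": "Insurer C", "provider_id": "VENDOR-778", "id_type": "Other", "parent_id": "LEI-PARENT-01"},
]


def normalise(identifier: str) -> str:
    """Strip whitespace and upper-case so formatting variants compare equal."""
    return identifier.strip().upper()


def formatting_variants(rows):
    """Return identifiers that appear in more than one raw spelling."""
    raw_by_norm = defaultdict(set)
    for row in rows:
        raw_by_norm[normalise(row["provider_id"])].add(row["provider_id"])
    return {norm: sorted(raw) for norm, raw in raw_by_norm.items() if len(raw) > 1}


def non_standard_identifiers(rows):
    """Return rows whose identifier type is neither LEI nor EUID.

    Assumes every row describes a legal-person provider.
    """
    return [row for row in rows if row["id_type"] not in {"LEI", "EUID"}]


variants = formatting_variants(rows)
to_review = non_standard_identifiers(rows)
```

Here `variants` surfaces the two spellings of the same identifier reported by Bank A and Bank B, and `to_review` isolates the Insurer C row. Neither result proves an error; each is a prompt to confirm the consolidation before a reviewer has to ask.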

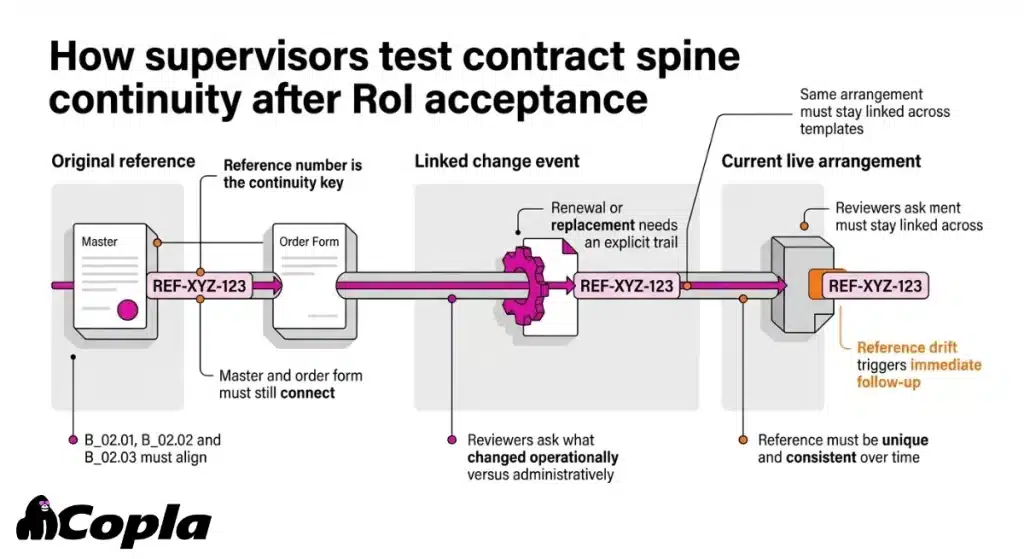

2. Contract spine traceability fails when reference numbers don’t stay stable across templates

The obligation is precise. B_02.01 requires the contractual arrangement reference number to be unique. It has to stay consistent over time. And it has to be used consistently across all templates for the same contractual arrangement.

B_02.01 also covers standalone arrangements, overarching or master arrangements, and subsequent or associated arrangements such as implementing arrangements and order forms.

Reviewers use the reference number as a continuity test.

The FAQ (19 March 2025) says the financial entity chooses that reference number and should ensure its consistency and uniqueness throughout the RoI, especially in a group.

What they are really checking is simple: can the register explain change without guesswork?

They will look for:

- a clear link between the old and new arrangement

- lifecycle facts such as renewal, replacement, or termination

- a register trail that still makes sense across templates

If that trail disappears, continuity stops being trustworthy.

Illustrative scenario

Reference drift across master + order form

A group reports a master cloud agreement in one cycle under reference CA-100. In the next cycle, the master agreement appears as MA-44.

The order form for the same service appears as CA-100-1, but the overarching contractual arrangement reference number no longer links those records consistently.

The next supervisory question is immediate: which live arrangement governs the service, and what changed operationally rather than just administratively?

Once the register cannot answer that on its own, follow-up starts. The first contract renewal usually exposes the problem.

That discipline usually comes from treating the reference number as a managed key, not a procurement label, with one owner, one change rule, and a required linkage whenever a new reference is introduced.
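One way to picture the "managed key" idea is a small cycle-over-cycle check. This is a sketch under stated assumptions: the register is reduced to a dictionary keyed by reference number, the `overarching_ref` field and the `reference_changes` linkage log are hypothetical constructs, and the sample data models the scenario above with the order form still pointing at the old master reference.

```python
# Hypothetical snapshots of two reporting cycles, keyed by contractual
# arrangement reference number. The structure is illustrative only.
previous_cycle = {
    "CA-100": {"type": "master", "overarching_ref": None},
}
current_cycle = {
    "MA-44": {"type": "master", "overarching_ref": None},
    "CA-100-1": {"type": "order form", "overarching_ref": "CA-100"},
}

# Hypothetical linkage log kept by the owner of the reference key:
# old reference -> new reference. Empty here, as in the scenario.
reference_changes = {}


def unexplained_disappearances(previous, current, changes):
    """References present last cycle, absent now, with no recorded successor."""
    return sorted(ref for ref in previous if ref not in current and ref not in changes)


def dangling_overarching_refs(current):
    """Arrangements whose overarching reference is missing from the current register."""
    return sorted(
        ref
        for ref, record in current.items()
        if record["overarching_ref"] and record["overarching_ref"] not in current
    )


missing = unexplained_disappearances(previous_cycle, current_cycle, reference_changes)
dangling = dangling_overarching_refs(current_cycle)
```

With the empty log, `missing` contains `CA-100` and `dangling` contains `CA-100-1`. Recording `{"CA-100": "MA-44"}` in the log clears the first finding, but the order form still needs its overarching reference updated before the trail reads cleanly across templates.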

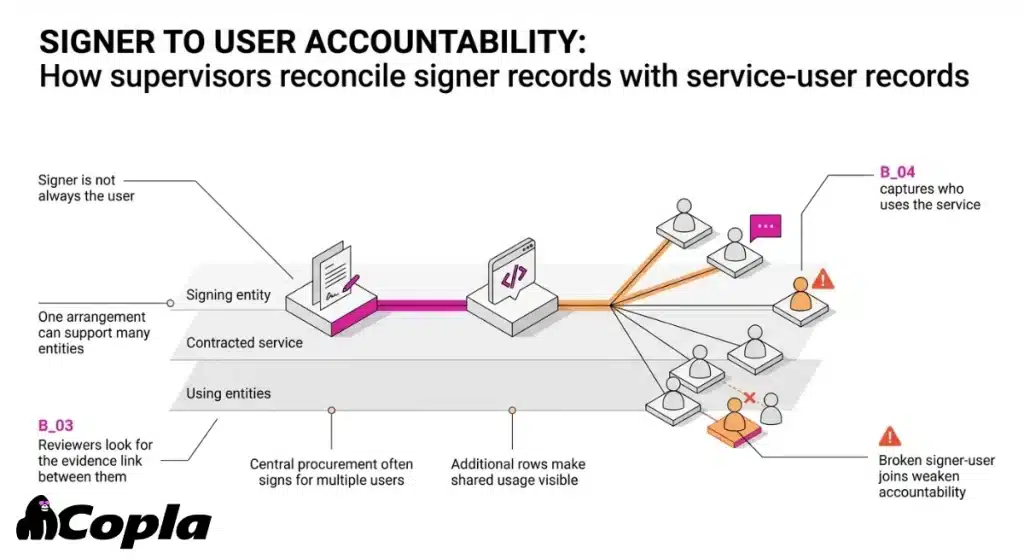

3. Signer vs service-user fails when accountability doesn’t match consumption (B_03 vs B_04)

This is a classic RoI problem.

A central procurement entity signs the arrangement. Several subsidiaries actually use the service. Or an intra-group service provider signs on behalf of multiple users. The record then has to prove that those views still belong to the same service relationship.

Reviewers try to trace one relationship from signer to contract to service to service user.

The templates split those roles deliberately.

B_03.01 captures the entities signing contractual arrangements. B_04.01 captures the entities making use of the ICT services. The instructions also say that, in consolidated contexts, the entity signing the arrangement is not necessarily the entity making use of the service.

The FAQ (19 March 2025) adds one practical point.

Where multiple entities use the same arrangement, additional rows should be added so the usage pattern is visible. Follow-up usually asks for the evidence link: where in the operating model is the signer-to-user relationship defined, and where is it kept current?

This is one of the easiest ways for a RoI to look fine row by row and still fail as a dataset.


Procurement may hold the signed paper. ICT may understand the live dependency. Risk may own the reporting pack.

If B_03.01 and B_04.01 do not tell the same story, the reviewer cannot tell who committed contractually, who depends operationally, and how that dependency should be aggregated.

That goes straight to accountability. It is also the kind of operational friction that makes the RoI in practice much harder than the templates suggest.

The relationship holds better when signer and user are treated as a deliberate one-to-many structure, not collapsed into a “contract owner” shortcut.
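The signer-to-user reconciliation can be sketched as a join on the contractual arrangement reference. The example below assumes B_03.01-style and B_04.01-style rows have been flattened into dictionaries; the field names `contract_ref`, `signer`, and `user`, and all sample values, are hypothetical.

```python
from collections import defaultdict

# Hypothetical B_03.01-style rows: who signed each arrangement.
signers = [
    {"contract_ref": "CA-200", "signer": "Group Procurement Ltd"},
    {"contract_ref": "CA-300", "signer": "Bank A"},
]

# Hypothetical B_04.01-style rows: who uses the services under each arrangement.
users = [
    {"contract_ref": "CA-200", "user": "Bank A"},
    {"contract_ref": "CA-200", "user": "Insurer C"},
    {"contract_ref": "CA-400", "user": "Bank B"},
]


def index_by_contract(rows, field):
    """Map each contract reference to the set of values found in `field`."""
    index = defaultdict(set)
    for row in rows:
        index[row["contract_ref"]].add(row[field])
    return index


def reconcile(signers, users):
    """Compare signer and user views and report where they do not line up."""
    signed = index_by_contract(signers, "signer")
    used = index_by_contract(users, "user")
    shared = sorted(set(signed) & set(used))
    return {
        "signed_but_no_user": sorted(set(signed) - set(used)),
        "used_but_no_signer": sorted(set(used) - set(signed)),
        "one_to_many": {ref: sorted(used[ref]) for ref in shared if len(used[ref]) > 1},
    }


report = reconcile(signers, users)
```

The `one_to_many` entry is not a defect. It is the deliberate structure described above, made explicit so it can be evidenced. The other two lists are the cases where the two templates stop telling the same story.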

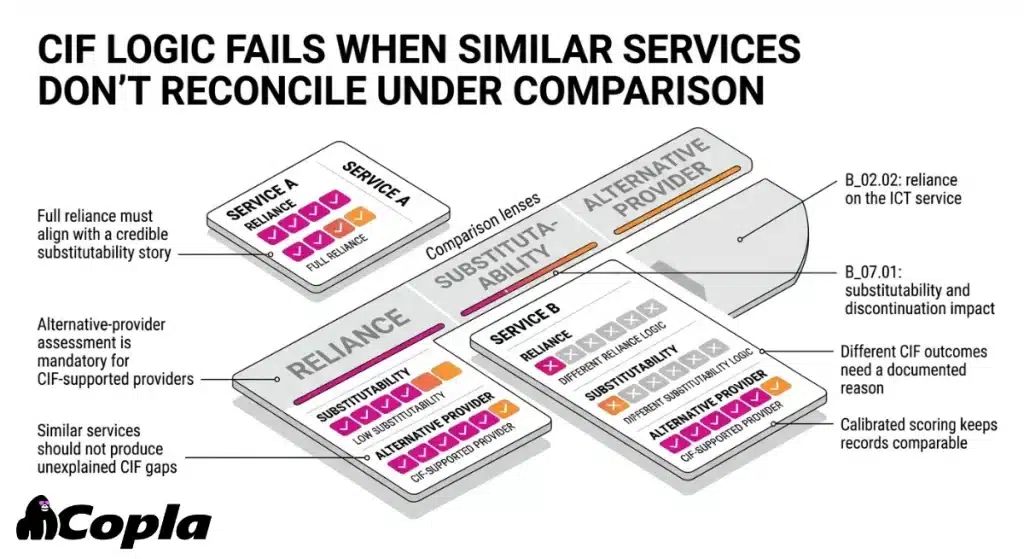

4. CIF logic fails when similar services don’t reconcile under comparison

One service is marked as full reliance. Another near-identical service is treated much more lightly. The rationale starts to thin out once the reviewer compares them side by side.

Reviewers can run that comparison because:

- B_06.01 captures whether the function is critical or important, and why

- B_02.02 captures the level of reliance on the ICT service

- B_07.01 captures substitutability, impact of discontinuing the service, reintegration complexity, and whether alternative ICT providers have been identified

For providers supporting a critical or important function, that alternative-provider assessment is mandatory.

After acceptance, supervisors are not asking whether a CIF rationale exists somewhere in the programme. They are asking whether it survives comparison.

What tends to trigger follow-up:

- similar services carry materially different CIF logic with no visible reason

- “full reliance” sits next to a weak substitutability story

- a provider is hard to replace in one entity and easy to replace in another, without a credible business explanation

What prevents this is a calibrated CIF methodology: the same scoring definitions, the same reliance and substitutability logic, and a documented reason whenever the record genuinely deviates.
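The side-by-side comparison reviewers run can be rehearsed internally. This is a minimal sketch, assuming services have already been mapped to a common service label across entities; the labels and the reliance and substitutability values shown are hypothetical placeholders, not the official closed-list values.

```python
from collections import defaultdict

# Hypothetical CIF-related records; labels and values are illustrative only.
records = [
    {"entity": "Bank A", "service": "core banking hosting",
     "reliance": "full reliance", "substitutability": "not substitutable"},
    {"entity": "Bank B", "service": "core banking hosting",
     "reliance": "low reliance", "substitutability": "easily substitutable"},
    {"entity": "Insurer C", "service": "payroll SaaS",
     "reliance": "low reliance", "substitutability": "easily substitutable"},
]


def divergent_assessments(records, fields=("reliance", "substitutability")):
    """Return, per service, the assessment fields whose values differ across entities."""
    by_service = defaultdict(list)
    for record in records:
        by_service[record["service"]].append(record)

    findings = {}
    for service, group in by_service.items():
        differing = [f for f in fields if len({r[f] for r in group}) > 1]
        if differing:
            findings[service] = differing
    return findings


findings = divergent_assessments(records)
```

Each finding is a place where a documented reason for the deviation should exist. If none does, the methodology, not the individual row, is usually what needs attention.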

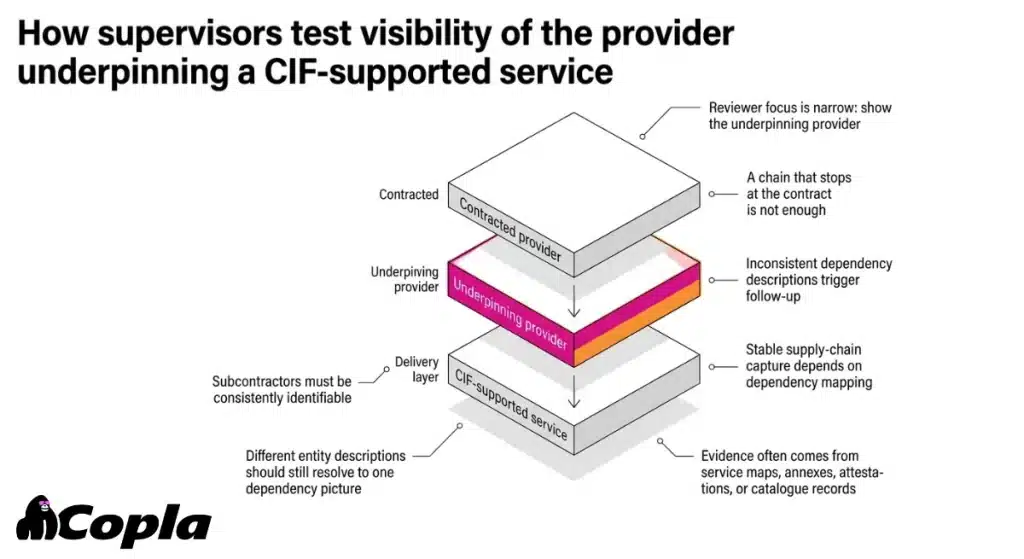

5. Supply-chain visibility fails when you can’t show the underpinning provider

For CIF-supported services, the reviewer-facing test is narrow: can the institution show the provider that actually underpins delivery?

Follow-up usually starts when:

- subcontractors exist but are not consistently identifiable

- the chain stops at the contracted provider even though another layer delivers the service in practice

- different entities describe the same dependency differently enough that the picture stops being trustworthy

The next request is usually for the operational record or dependency evidence showing who delivers that layer in practice. That can mean architecture or service mapping, contract annexes, provider attestations, or internal service catalogue records. The fuller service-chain mechanics sit in sub-processors under DORA.

This only stabilises when supply-chain capture is treated as dependency mapping, not as a recycled processor list or a contractual formality.
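Treating supply-chain capture as dependency mapping implies the chain can be checked like any other dataset. The sketch below assumes a hypothetical flat export with one row per link in the chain; the `rank` field (1 for the contracted provider, higher numbers for layers beneath it) and all sample values are illustrative assumptions.

```python
from collections import defaultdict

# Hypothetical supply-chain rows for CIF-supported services.
chain_rows = [
    {"entity": "Bank A", "service": "card processing", "rank": 1, "provider_id": "LEI-PROC-01"},
    {"entity": "Bank A", "service": "card processing", "rank": 2, "provider_id": "LEI-CLOUD-09"},
    {"entity": "Bank B", "service": "card processing", "rank": 1, "provider_id": "LEI-PROC-01"},
    {"entity": "Bank B", "service": "card processing", "rank": 2, "provider_id": ""},
]


def unidentified_links(rows):
    """Chain links that exist but carry no usable provider identifier."""
    return [
        (row["entity"], row["service"], row["rank"])
        for row in rows
        if not row["provider_id"].strip()
    ]


def inconsistent_services(rows):
    """Services whose chain is described differently by different entities."""
    chains = defaultdict(dict)
    for row in rows:
        chains[(row["entity"], row["service"])][row["rank"]] = row["provider_id"]

    variants = defaultdict(set)
    for (_, service), chain in chains.items():
        variants[service].add(tuple(sorted(chain.items())))
    return sorted(service for service, seen in variants.items() if len(seen) > 1)


gaps = unidentified_links(chain_rows)
conflicts = inconsistent_services(chain_rows)
```

A check like this cannot detect a layer that was never recorded. That still depends on the architecture, contract, and service-catalogue evidence listed above.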

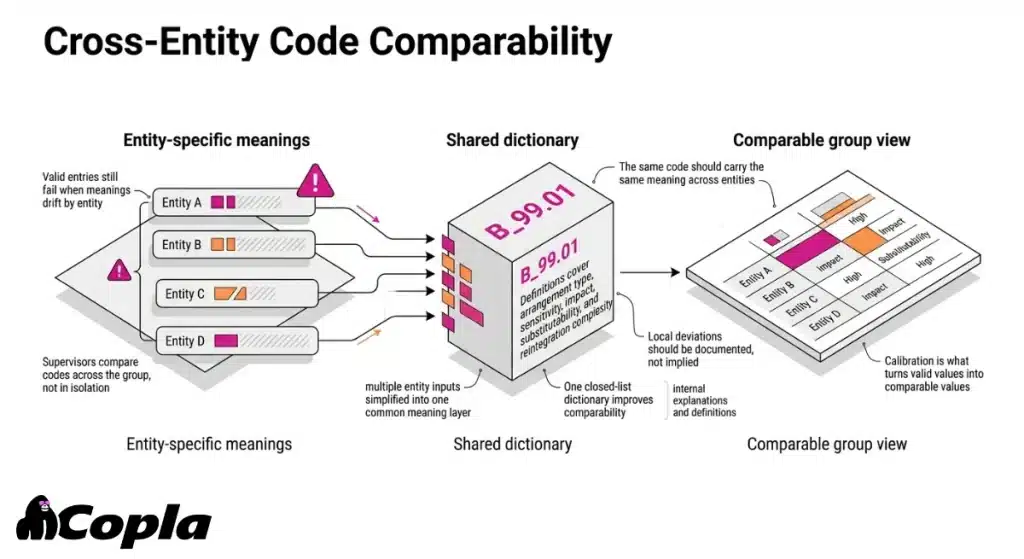

6. Coded values fail when “valid” still isn’t comparable (B_99.01)

Some fields are populated and technically valid, yet still impossible to interpret consistently across the group.

The test is cross-entity. Supervisors compare the same coded values across the group and need to be confident that each value means the same thing wherever it appears.

Comparability depends on B_99.01, which requires financial entities to provide entity-internal explanations, meanings, and definitions of the closed-list values used in the register. That instruction covers:

- contractual arrangement type

- data sensitivity

- impact

- substitutability

- reintegration complexity

- related closed-list values

This is one of the quietest first-pass failures, and it is usually underestimated.

“High” means one thing in one entity and something looser in another.

“Highly complex substitutability” reflects a real market constraint in one team and a local scoring habit in another.

“Overarching arrangement” is a true contractual umbrella in one business line and a broad procurement label in another.

The field is filled. No one can compare it.

Group-wide comparability usually depends on one closed-list dictionary, one calibration, and documented local deviations where they genuinely exist.
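A single closed-list dictionary can be enforced mechanically. The following is a sketch, assuming each entity's B_99.01-style definitions are available as rows and that the group maintains one reference dictionary; the structure, field names, and definition texts are hypothetical.

```python
# Hypothetical group-level dictionary: (field, value) -> agreed definition.
group_dictionary = {
    ("impact", "High"): "Service loss halts a critical or important function within one business day",
}

# Hypothetical entity-level definitions, in the spirit of B_99.01.
entity_definitions = [
    {"entity": "Bank A", "field": "impact", "value": "High",
     "definition": "Service loss halts a critical or important function within one business day"},
    {"entity": "Bank B", "field": "impact", "value": "High",
     "definition": "Noticeable disruption to staff"},
    {"entity": "Insurer C", "field": "substitutability", "value": "Highly complex",
     "definition": "Fewer than two viable providers in the market"},
]


def local_deviations(definitions, dictionary):
    """Entity definitions that differ from the agreed group definition."""
    return [
        (row["entity"], row["field"], row["value"])
        for row in definitions
        if (row["field"], row["value"]) in dictionary
        and row["definition"] != dictionary[(row["field"], row["value"])]
    ]


def uncalibrated_values(definitions, dictionary):
    """Coded values in use that the group dictionary does not define at all."""
    return sorted(
        {(row["field"], row["value"]) for row in definitions
         if (row["field"], row["value"]) not in dictionary}
    )


deviations = local_deviations(entity_definitions, group_dictionary)
uncalibrated = uncalibrated_values(entity_definitions, group_dictionary)
```

Exact string comparison is deliberately strict here. In practice a deviation may be legitimate, in which case it should be documented as such rather than silently tolerated.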

What “credible after acceptance” looks like

A credible RoI is usable without offline reconstruction. The joins hold, the meanings hold, and the supervisory picture does not depend on someone explaining the register outside the register.

When those conditions fail, follow-up starts fast, usually because broken joins and inconsistent meanings have made the dataset harder to trust than the filing result suggests.